Local Inference Without RAM Limits: How Hypura Streams 70B Models from NVMe

Hypura is essentially a smart paging layer, but one that's model-architecture-aware rather than generic OS-level swap. The OS doesn't know that a model's attention norms are accessed every single token while Mixture-of-Experts (MoE) weights are accessed sparsely—only 2 of 8 experts fire per token. Hypura does. And that knowledge enables placement decisions that are dramatically smarter than naive mmap or swap.

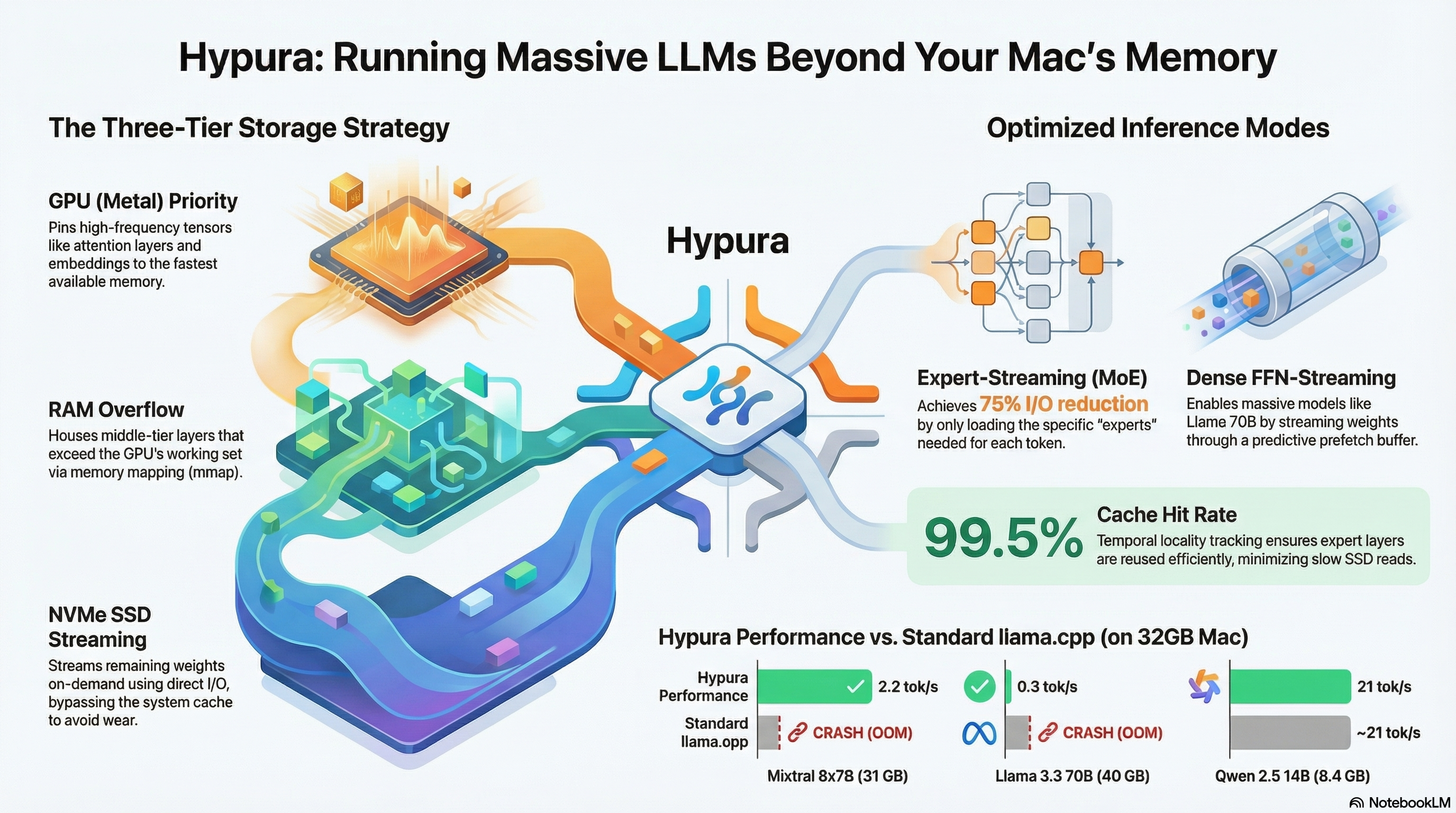

Three Storage Tiers, Three Modes

Hypura doesn't apply a single strategy to all models. It uses a placement solver to assign every tensor to a tier based on access frequency, bandwidth costs, and hardware profiling—then picks one of three operating modes:

1 Full-Resident

When the entire model fits in GPU + RAM, Hypura stays out of the way entirely. Zero overhead compared to plain llama.cpp. This mode exists so you can use Hypura as a universal launcher without paying a penalty for smaller models that fit comfortably.

2 Expert-Streaming (MoE models)

Built for models like Mixtral 8x7B. The ~1 GB of non-expert tensors (attention, norms, embeddings) stays permanently GPU-resident—these are accessed on every token and evicting them would be brutal. The expert weight blobs, which are large but accessed sparsely, stream from NVMe on demand.

The key enabler here is router interception: Hypura hooks into the eval callback to see which experts the router selects before it needs to load them. This lets it prefetch the right expert weights ahead of time using co-activation tracking—observing which expert pairs tend to fire together and predicting the next selection from observed patterns. The result is a 99.5% cache hit rate from temporal locality, and roughly a 75% reduction in I/O compared to loading naively.

3 Dense FFN-Streaming (large dense models)

For models like Llama 70B where there are no experts to exploit. The attention layers and norms (~8 GB) stay GPU-resident, since they're accessed every token. The FFN weights—around 32 GB of the model—stream through a dynamically-sized pool buffer on NVMe with prefetch lookahead.

This is the harder case. Dense FFNs don't have the sparsity that makes expert-streaming so effective, so the throughput is lower, but the model becomes runnable on hardware that would otherwise OOM.

What's Clever About the Implementation

Several design decisions here are worth calling out individually:

LP + Greedy Placement Solver

Rather than hand-tuning which tensors live where, Hypura runs a placement solver (linear programming + greedy refinement) at startup. It takes access frequency profiles, available memory at each tier, and bandwidth costs as inputs, and assigns every tensor to GPU, RAM, or NVMe accordingly. Hardware profiling means the decisions adapt to your specific machine.

Direct I/O with F_NOCACHE

Reads use F_NOCACHE + pread() to bypass the kernel page cache entirely. Since Hypura is already managing tensor placement explicitly, letting the OS cache the same data in the page cache would just waste RAM that could hold more model weights. This is a small but opinionated detail that shows the project is serious about the memory budget.

Co-Activation Tracking

For MoE models, Hypura records which pairs of experts tend to activate together across tokens. Over time, when expert A fires, it speculatively prefetches the experts most likely to follow based on observed co-activation patterns. This transforms a reactive I/O system into a partially predictive one—and it's the mechanism behind the high cache hit rate.

Ollama-Compatible HTTP Server

Hypura exposes an Ollama-compatible HTTP API, so it drops into any existing toolchain already targeting Ollama without modification. If you've built workflows around Open WebUI, Continue, or any client that speaks Ollama's protocol, Hypura just works as a backend swap.

Practical Performance

The headline numbers from the project are honest about what to expect:

| Model | Hardware | Vanilla llama.cpp | With Hypura |

|---|---|---|---|

| Llama 70B (40 GB) | M1 Max, 32 GB RAM | OOM crash | 0.3 tok/s |

| Mixtral 8x7B | M1 Max, 32 GB RAM | OOM / unusable | 2.2 tok/s |

0.3 tok/s is slow—roughly a character every three seconds. But for local experimentation where you want to run a 70B-class model without paying cloud API costs, inspect its behavior at a specific layer, or test fine-tunes without an internet connection, "runnable" is the bar that matters. Mixtral at 2.2 tok/s is actually usable for short queries.

The Meta-Commentary

The author is candid that most of Hypura's code was LLM-generated through directed prompting. There's a certain recursiveness to this: a tool built to enable running larger local models was itself built by leveraging those models. It's a fair illustration of where the practical value of local inference sits today—not real-time chat, but as a substrate for the kind of exploratory, iterative, offline work that benefits from having a capable model on hand without rate limits or API costs.

Who It's For

Hypura isn't a replacement for cloud inference at scale, and it doesn't pretend to be. The target is anyone who wants to run a frontier-scale model locally for experimentation—fine-tune testing, prompt engineering on a large base model, offline development, or simply satisfying curiosity about how a 70B model responds compared to a 7B one without committing to a paid API.

The NVMe-paging approach also has a natural ceiling: you need a fast SSD (Apple Silicon machines with their unified memory and high-bandwidth storage are a good fit), and the token rate scales inversely with how much of the model has to stream per forward pass. MoE architectures fare better here than dense ones because sparsity is a structural advantage that Hypura can exploit.

Worth keeping an eye on as the project matures—especially if the placement solver gets smarter about adapting to access patterns observed across multiple inference runs rather than just within a single session.